As part of the Theoretical Research team at Bitdefender, I work on fundamental research problems, focusing on anomaly detection and robustness to distribution shifts. In 2022, I received my PhD degree from the University Politehnica of Bucharest under the supervision of Marius Leordeanu and Adina Magda Florea. During my PhD, I worked on the challenging problem of unsupervised learning from visual data; my thesis is entitled "Unsupervised Visual Learning by Exploiting the Spatio-Temporal Consistency of Highly Probable Positive Features". I also obtained my MSc in Artificial Intelligence and my BSc in Computer Science and Information Technology from the University Politehnica of Bucharest.

My research interests concern unsupervised/self-supervised learning, anomaly detection, and generalization under distribution shifts.

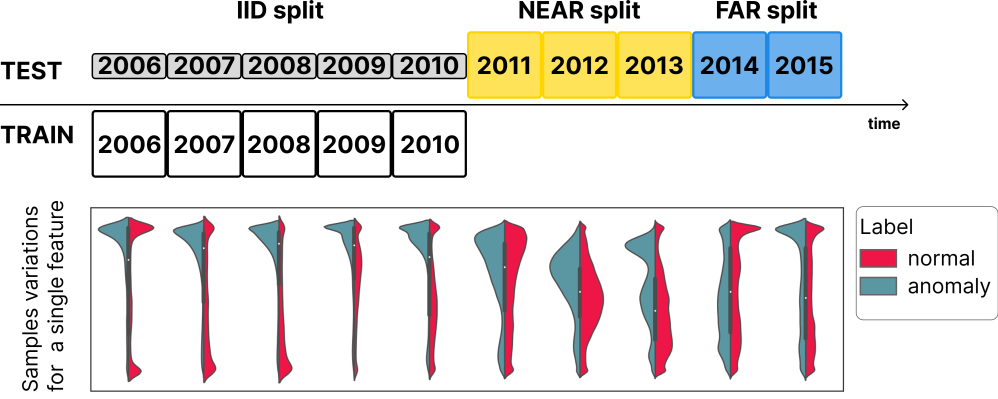

Analyzing distribution shifts in data is a growing research direction in modern Machine Learning (ML), and it has led to new benchmarks that provide suitable scenarios for studying the generalization properties of ML models. Existing benchmarks focus on supervised learning; to the best of our knowledge, there is none for unsupervised learning. Therefore, we introduce an unsupervised anomaly detection benchmark with data that shifts over time, built on Kyoto-2006+, a traffic dataset for network intrusion detection. This type of data satisfies the premise of a shifting input distribution: it covers a large time span (10 years), with naturally occurring changes over time (e.g., users modifying their behavior patterns, and software updates). We first highlight the non-stationary nature of the data using a basic per-feature analysis, t-SNE, and an Optimal Transport approach for measuring the overall distribution distances between years. Next, we propose AnoShift, a protocol that splits the data into IID, NEAR, and FAR test splits. We validate the performance degradation over time with diverse models, ranging from classical approaches to deep learning. Finally, we show that by acknowledging the distribution shift problem and properly addressing it, performance can be improved compared to classical training, which assumes independent and identically distributed data (on average, by up to 3% for our approach).
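The split protocol and the year-to-year distance measurement can be sketched as follows. This is a minimal illustration under assumptions, not the AnoShift code: records are hypothetical `(year, features)` pairs, `split_by_year` and `mean_feature_shift` are hypothetical helpers, and the Optimal Transport distance is simplified to an exact one-dimensional Wasserstein-1 distance computed per feature and then averaged, rather than a distance over the full feature space.

```python
from collections import defaultdict
from statistics import fmean
from typing import Dict, List, Sequence, Tuple

# Hypothetical record format: (year, numeric feature vector).
# This is NOT the actual Kyoto-2006+ schema, just enough structure for the sketch.
Record = Tuple[int, List[float]]


def split_by_year(records: Sequence[Record], iid_years: range,
                  near_years: range, far_years: range) -> Dict[str, List[Record]]:
    """Assign records to IID / NEAR / FAR pools purely by collection year.

    The IID pool would later be divided into training data and an IID test split.
    """
    splits: Dict[str, List[Record]] = defaultdict(list)
    for year, feats in records:
        if year in iid_years:
            splits["IID"].append((year, feats))
        elif year in near_years:
            splits["NEAR"].append((year, feats))
        elif year in far_years:
            splits["FAR"].append((year, feats))
    return dict(splits)


def wasserstein_1d(a: Sequence[float], b: Sequence[float]) -> float:
    """Exact 1-D Wasserstein-1 distance between two empirical samples.

    Uses W1 = integral of |F_a(t) - F_b(t)| dt, where F_a and F_b are the
    empirical CDFs, which are constant between consecutive observed values.
    """
    if not a or not b:
        raise ValueError("both samples must be non-empty")
    a, b = sorted(a), sorted(b)
    points = sorted(set(a) | set(b))
    total, i, j = 0.0, 0, 0
    for left, right in zip(points, points[1:]):
        # Count samples <= left to get each CDF value on [left, right).
        while i < len(a) and a[i] <= left:
            i += 1
        while j < len(b) and b[j] <= left:
            j += 1
        total += abs(i / len(a) - j / len(b)) * (right - left)
    return total


def mean_feature_shift(split_a: Sequence[Record], split_b: Sequence[Record]) -> float:
    """Average the per-feature 1-D W1 distances between two splits."""
    n_features = len(split_a[0][1])
    return fmean(
        wasserstein_1d([f[k] for _, f in split_a], [f[k] for _, f in split_b])
        for k in range(n_features)
    )
```

For example, `split_by_year(records, range(2006, 2011), range(2011, 2014), range(2014, 2016))` places 2006 to 2010 in the IID pool, 2011 to 2013 in NEAR and 2014 to 2015 in FAR; these boundaries are chosen for illustration only. A larger `mean_feature_shift` from the IID pool to a test split indicates a stronger shift.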

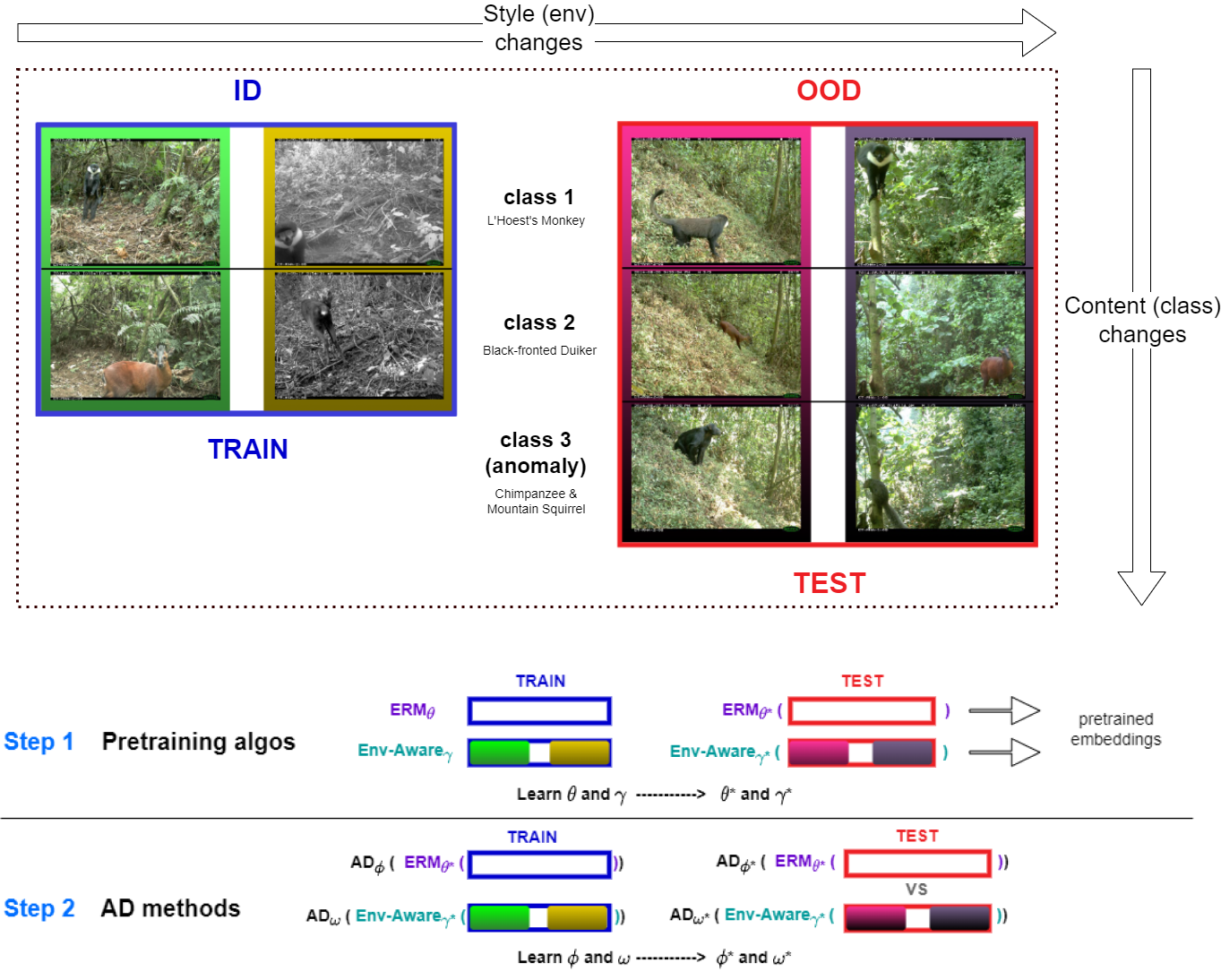

We introduce a formalization and benchmark for the unsupervised anomaly detection task in the distribution-shift scenario. Our work builds upon the iWildCam dataset, and, to the best of our knowledge, we are the first to propose such an approach for visual data. We empirically validate that environment-aware methods perform better in such cases than basic Empirical Risk Minimization (ERM). We then propose an extension for generating positive samples for contrastive methods that takes the environment labels into account during training, improving the ERM baseline score by 8.7%.
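One hypothetical way environment labels could enter positive-pair generation is sketched below; it is an assumption for illustration, not the procedure from the paper. The positive view keeps the anchor's content but takes its per-channel mean and standard deviation from an image of a different environment (an AdaIN-style statistics swap), so a contrastive objective would be pushed to ignore environment-specific appearance. The image layout, `restyle`, and `env_aware_positive` are all hypothetical.

```python
import random
import statistics
from typing import Dict, List

# Hypothetical image layout: one flattened list of pixel values per channel.
Image = List[List[float]]


def restyle(content: Image, style: Image, eps: float = 1e-6) -> Image:
    """AdaIN-style statistics swap: keep the content's spatial pattern,
    but give each channel the mean and standard deviation of the style image."""
    out: Image = []
    for c_ch, s_ch in zip(content, style):
        c_mu, c_sd = statistics.fmean(c_ch), statistics.pstdev(c_ch)
        s_mu, s_sd = statistics.fmean(s_ch), statistics.pstdev(s_ch)
        out.append([(v - c_mu) / (c_sd + eps) * s_sd + s_mu for v in c_ch])
    return out


def env_aware_positive(anchor: Image, anchor_env: str,
                       pool: Dict[str, List[Image]], rng: random.Random) -> Image:
    """Build a positive view of `anchor` carrying the low-level statistics of an
    image sampled from a *different* environment (e.g., another camera trap)."""
    other_envs = [env for env, imgs in pool.items() if env != anchor_env and imgs]
    if not other_envs:
        # No other environment to borrow from: fall back to an unchanged copy.
        return [list(ch) for ch in anchor]
    style = rng.choice(pool[rng.choice(other_envs)])
    return restyle(anchor, style)
```

The pair `(anchor, env_aware_positive(anchor, env, pool, rng))` would then be fed to a standard contrastive loss such as InfoNCE, in place of or alongside the usual augmentation-based positive.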

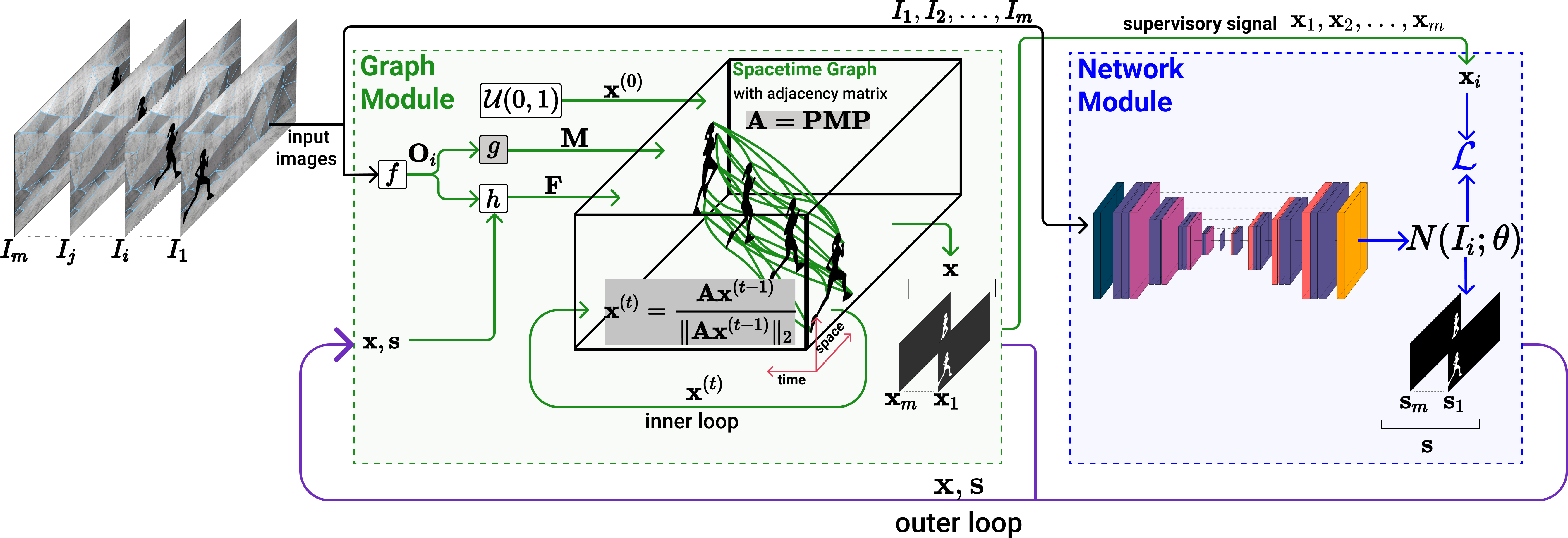

We propose a dual system for unsupervised object segmentation in video, which brings together two modules with complementary properties: a space-time graph that discovers objects in videos and a deep network that learns powerful object features. The system uses an iterative knowledge exchange policy. A novel spectral space-time clustering process on the graph produces unsupervised segmentation masks, which are passed to the network as pseudo-labels. The network learns to segment in single frames what the graph discovers in video, and passes back to the graph strong image-level features that improve its node-level features in the next iteration. Knowledge is exchanged for several cycles until convergence. The graph has one node per video pixel, yet object discovery is fast: it uses a novel power iteration algorithm that computes the main space-time cluster as the principal eigenvector of a special Feature-Motion matrix, without actually computing the matrix. A thorough experimental analysis validates our theoretical claims and demonstrates the effectiveness of the cyclical knowledge exchange. We also perform experiments in the supervised scenario, incorporating features pretrained with human supervision. We achieve state-of-the-art performance in both unsupervised and supervised scenarios on four challenging datasets: DAVIS, SegTrack, YouTube-Objects, and DAVSOD.
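The matrix-free idea can be illustrated with a small power-iteration sketch. The matrix construction here is an assumption, not the paper's Feature-Motion matrix: we take `M = F F^T + w (P + P^T)`, where `F` stacks per-pixel features and `P` links each pixel to the pixel its (hypothetical) optical flow points to. Products `M x` are computed as `F (F^T x)` plus sparse flow updates, so the n x n matrix is never formed. The helper names are hypothetical.

```python
import math
import random
from typing import Callable, List, Sequence

Vector = List[float]


def normalize(x: Sequence[float]) -> Vector:
    norm = math.sqrt(sum(v * v for v in x))
    if norm == 0.0:
        raise ValueError("zero vector: power iteration cannot continue")
    return [v / norm for v in x]


def feature_motion_matvec(features: Sequence[Sequence[float]],
                          flow_target: Sequence[int],
                          motion_weight: float) -> Callable[[Sequence[float]], Vector]:
    """Return x -> M x for M = F F^T + w (P + P^T), without building the n x n M.

    features[i]: feature vector of pixel i (F is n x d).
    flow_target[i]: index of the pixel that i moves to in the next frame, or -1.
    """
    d = len(features[0])

    def matvec(x: Sequence[float]) -> Vector:
        # Feature term: F (F^T x) costs O(n d) instead of O(n^2).
        proj = [sum(f[k] * xi for f, xi in zip(features, x)) for k in range(d)]
        y = [sum(fk * pk for fk, pk in zip(f, proj)) for f in features]
        # Motion term: symmetric links between pixels connected by the flow.
        for i, j in enumerate(flow_target):
            if j >= 0:
                y[i] += motion_weight * x[j]
                y[j] += motion_weight * x[i]
        return y

    return matvec


def power_iteration(matvec: Callable[[Sequence[float]], Vector], n: int,
                    iters: int = 500, tol: float = 1e-12, seed: int = 0) -> Vector:
    """Principal eigenvector of a symmetric matrix known only through matvec."""
    rng = random.Random(seed)
    # A strictly positive start suits nonnegative matrices (Perron-Frobenius).
    x = normalize([rng.random() + 0.1 for _ in range(n)])
    for _ in range(iters):
        y = normalize(matvec(x))
        if max(abs(a - b) for a, b in zip(x, y)) < tol:
            return y
        x = y
    return x
```

When the features are nonnegative, this `M` is symmetric with nonnegative entries, so its principal eigenvector can be taken nonnegative and its entries read as soft membership scores for the dominant space-time cluster.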

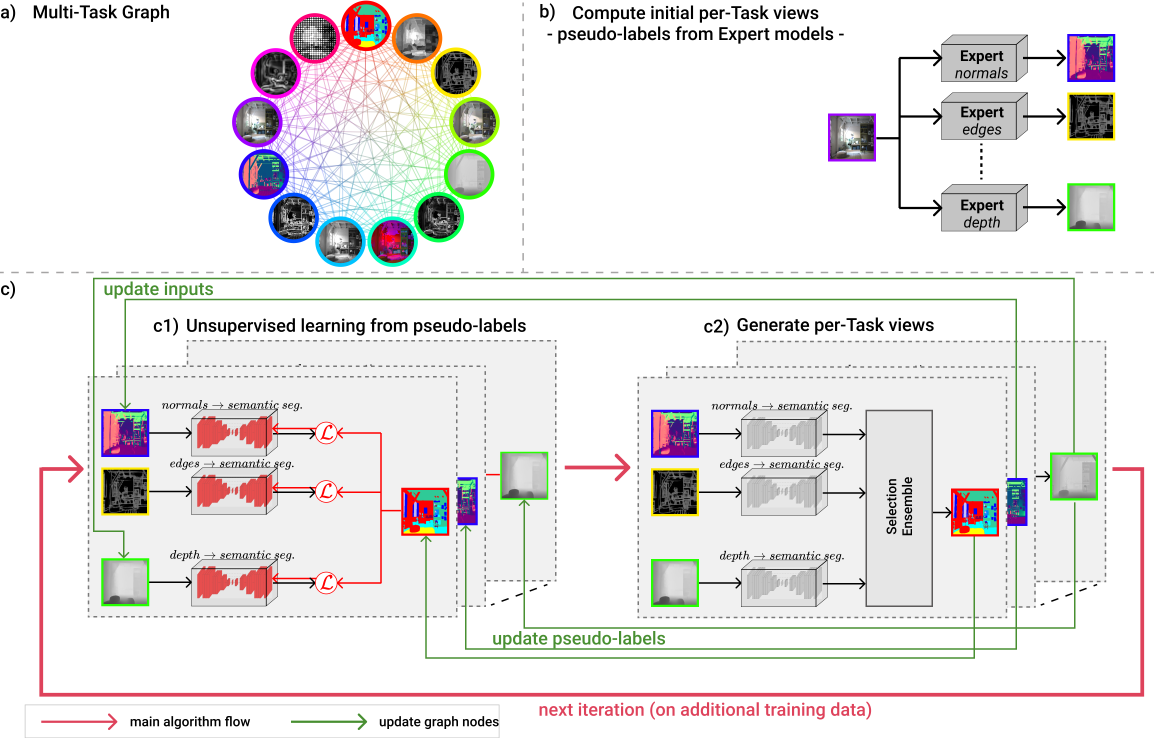

The human ability to synchronize the feedback from all of our senses inspired recent works in multi-task and multi-modal learning. While these works rely on expensive supervision, our multi-task graph requires only pseudo-labels from expert models. Every graph node represents a task, and each edge learns a transformation between tasks. Once initialized, the graph learns in a self-supervised manner, based on a novel consensus shift algorithm that exploits the agreement between graph pathways to generate new pseudo-labels for the next learning cycle. We demonstrate significant improvement from one unsupervised learning iteration to the next, outperforming related recent methods in extensive multi-task learning experiments on two challenging datasets.
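A simplified sketch of the consensus step: for a target task, collect the prediction reaching it along every pathway and take a per-pixel robust aggregate as the next pseudo-label. The assumptions are ours: pathways are limited to single edges plus the target's own expert prediction, edges are plain Python callables, task names are hypothetical, and the element-wise median stands in for the consensus shift algorithm, whose exact selection mechanism may differ.

```python
import statistics
from typing import Callable, Dict, List, Sequence, Tuple

Prediction = List[float]  # hypothetical: one flattened per-pixel map per task
Edge = Callable[[Sequence[float]], Prediction]


def consensus_pseudo_label(target: str,
                           node_preds: Dict[str, Prediction],
                           edges: Dict[Tuple[str, str], Edge]) -> Prediction:
    """Element-wise median over all pathways that reach `target`.

    Pathways: the target's own (expert) prediction plus every edge src -> target
    applied to the source node's prediction.
    """
    candidates = [node_preds[target]]
    for (src, dst), edge in edges.items():
        if dst == target and src != target:
            candidates.append(edge(node_preds[src]))
    length = len(candidates[0])
    if any(len(c) != length for c in candidates):
        raise ValueError("all pathway predictions must have the same size")
    # The median is robust to a minority of outlying pathways at each pixel.
    return [statistics.median(vals) for vals in zip(*candidates)]
```

The returned map would serve as the pseudo-label for the target node in the next learning cycle, after which the edges are retrained and the process repeats.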

We address an essential problem in computer vision: unsupervised foreground object segmentation in video, where the main object of interest in a video sequence should be automatically separated from its background. An efficient solution to this task would enable large-scale video interpretation at a high semantic level without costly manual labeling. We propose an efficient unsupervised method for generating soft masks of foreground objects, based on the automatic selection of, and learning from, highly probable positive features. We show that such features can be selected efficiently by taking into account the spatio-temporal appearance and motion consistency of the object in the video sequence. We also emphasize the role of the contrasting properties between the foreground object and its background. Our model is built in several stages: we start from pixel-level analysis and move to descriptors that consider information over groups of pixels, combined with efficient motion analysis. We also prove theoretical properties of our unsupervised learning method, which, under some mild constraints, is guaranteed to learn the correct classifier even in the unsupervised case. We achieve competitive and even state-of-the-art results on the challenging YouTube-Objects and SegTrack datasets, while being at least one order of magnitude faster than competing methods. We believe that the strong performance of our method, along with its theoretical properties, constitutes a solid step towards solving unsupervised discovery in video.
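The core loop of learning from highly probable positives can be sketched as one refinement round: pick the pixels the current soft mask is most confident about as positives and negatives, fit a simple classifier on their descriptors, and re-score every pixel. The selection fractions, the nearest-class-mean linear classifier, and `refine_soft_mask` are hypothetical choices for illustration; the actual method uses its own descriptors and classifier.

```python
import math
from statistics import fmean
from typing import List, Sequence


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def refine_soft_mask(features: Sequence[Sequence[float]], soft_mask: Sequence[float],
                     pos_frac: float = 0.1, neg_frac: float = 0.5) -> List[float]:
    """One refinement round: learn from the most confident pixels, re-score all.

    features[i]: descriptor of pixel i; soft_mask[i]: current foreground score.
    """
    n = len(features)
    n_pos = max(1, int(pos_frac * n))
    n_neg = max(1, int(neg_frac * n))
    if n_pos + n_neg > n:
        raise ValueError("positive and negative selections would overlap")
    # Highly probable positives: top of the ranking; confident negatives: bottom.
    order = sorted(range(n), key=lambda i: soft_mask[i], reverse=True)
    pos, neg = order[:n_pos], order[n - n_neg:]
    dim = len(features[0])
    mu_p = [fmean(features[i][k] for i in pos) for k in range(dim)]
    mu_n = [fmean(features[i][k] for i in neg) for k in range(dim)]
    # Linear classifier whose boundary is the perpendicular bisector of the means.
    w = [p - q for p, q in zip(mu_p, mu_n)]
    b = -sum(wk * (p + q) / 2 for wk, p, q in zip(w, mu_p, mu_n))
    return [sigmoid(sum(wk * fk for wk, fk in zip(w, f)) + b) for f in features]
```

Feeding the returned scores back in as the next `soft_mask` gives an iterative scheme; pixels that start on the wrong side of 0.5 but resemble the confident foreground (or background) can be moved to the correct side after a round.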

October 2022

July 2022, InfoEducatie

January 2022, University Politehnica of Bucharest

November 2021, Romanian AI Days 2021

October 2019, Bucharest Deep Learning Meetup

October 2019, Bucharest Computer Vision Reading Group, Institute of Mathematics of the Romanian Academy

September 2019, Bucharest Computer Vision Reading Group, Institute of Mathematics of the Romanian Academy

June 2019, Bucharest Computer Vision Reading Group, Institute of Mathematics of the Romanian Academy

June 2019, RAAI 2019

April 2018, Bucharest Computer Vision Reading Group, Institute of Mathematics of the Romanian Academy

March 2018, Bucharest Computer Vision Reading Group, Institute of Mathematics of the Romanian Academy

May 2017, Bucharest Computer Vision Reading Group, Institute of Mathematics of the Romanian Academy

October 2016, Bucharest Computer Vision Reading Group, Institute of Mathematics of the Romanian Academy

December 2022, NeurIPS 2022 Datasets and Benchmarks

December 2022, NeurIPS 2022 - Workshop on Distribution Shifts: Connecting Methods and Applications

November 2021, BMVC 2021

November 2021, Romanian AI Days

July 2019, EEML 2019

July 2018, TMLSS 2018

October 2017, ICCV 2017